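the interrupt() function. The function accepts any JSON-serializable value and surfaces it to the caller. When you are ready to continue, resume execution by invoking the graph again with a Command; the resume value it carries becomes the return value of the interrupt() call inside the node. Unlike static breakpoints, which pause before or after specific nodes, interrupts are dynamic: they can be placed anywhere in your code and can be conditional on your application logic.

- Checkpointing keeps your place: the checkpointer writes the exact graph state so that you can resume later, even from an error state.

- thread_id is your pointer: setting config={"configurable": {"thread_id": ...}} tells the checkpointer which state to load.

- Interrupt payloads surface as __interrupt__: the value you pass to interrupt() is returned to the caller in the __interrupt__ field, so you know what the graph is waiting for.

The thread_id is effectively your persistence cursor. Reusing it resumes the same checkpoint; using a new value starts a brand-new thread with empty state.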

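Pause using interrupt

The interrupt function pauses graph execution and returns a value to the caller. When you call interrupt inside a node, LangGraph saves the current graph state and waits for you to resume execution with input. To use interrupt, you need:

- A checkpointer to persist the graph state (use a durable checkpointer in production)

- A thread ID in the config, so the runtime knows which state to resume from

- A call to interrupt() at the point where you want to pause (the payload must be JSON-serializable)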

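When you call interrupt, the following happens:

- Graph execution is suspended at the exact point where interrupt is called

- State is saved by the checkpointer so that execution can be resumed later. In production this should be a durable checkpointer (for example, one backed by a database)

- The value is returned to the caller under __interrupt__; it can be any JSON-serializable value (string, object, array, and so on)

- The graph waits indefinitely until you resume execution with a response

- When you resume, the response is passed back into the node and becomes the return value of the interrupt() call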

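Resuming interrupts

After an interrupt pauses execution, you resume the graph by invoking it again with a Command that contains the resume value. The resume value is passed back to the interrupt call, allowing the node to continue execution with the external input.

- You must use the same thread ID when resuming that was used when the interrupt occurred

- The value passed to Command(resume=...) becomes the return value of the interrupt call

- On resume, the node restarts from the beginning of the node where interrupt was called, so any code before the interrupt runs again

- You can pass any JSON-serializable value as the resume value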

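Common patterns

The key capability that interrupts unlock is pausing execution to wait for external input. This is useful for a variety of use cases, including:

- Approval workflows: pause before executing critical actions (API calls, database changes, financial transactions)

- Review and edit: let humans review and modify LLM outputs or tool calls before continuing

- Interrupting tool calls: pause before a tool call executes so it can be reviewed and edited first

- Validating human input: pause to validate human input before proceeding to the next step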

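Approve or reject

One of the most common uses of interrupts is to pause before a critical action and ask for approval. For example, you might want a human to approve an API call, a database change, or any other important decision. Resume with True to approve or False to reject.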


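Review and edit state

Sometimes you want a human to review and edit part of the graph state before continuing. This is useful for correcting LLM output, adding missing information, or making adjustments.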


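Interrupts in tools

You can also place interrupts directly inside tool functions. This makes the tool itself pause for approval whenever it is called, and allows a human to review and edit the tool call before it executes.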


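Validating human input

Sometimes you need to validate input from a human and ask again if it is invalid. You can do this with multiple interrupt calls in a loop.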


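Rules of interrupts

When you call interrupt inside a node, LangGraph suspends execution by raising an exception that signals the runtime to pause. This exception propagates up through the call stack and is caught by the runtime, which tells the graph to save the current state and wait for external input. When execution resumes (after you provide the requested input), the runtime restarts the entire node from the beginning; it does not resume from the exact line where interrupt was called. This means any code that ran before the interrupt executes again. Because of this, there are a few important rules to follow when working with interrupts to make sure they behave as expected.

Do not wrap interrupt calls in try/except

interrupt pauses execution at the call site by throwing a special exception. If you wrap the interrupt call in a try/except block that catches this exception, the interrupt will not be passed back to the graph.

- ✅ Separate interrupt calls from error-prone code

- ✅ Use specific exception types in try/except blocks

- 🔴 Do not wrap interrupt calls in bare try/except blocks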
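To see why a broad except clause is a problem, here is a toy model in plain Python. It does not use LangGraph: PauseSignal, fake_interrupt, and reaches_runtime are hypothetical stand-ins for the special exception, the interrupt call, and the runtime that is supposed to catch it.

```python
class PauseSignal(Exception):
    """Hypothetical stand-in for the special exception raised by interrupt."""


def fake_interrupt(payload):
    # Toy version: always pauses by raising, as on a first run with no resume value.
    raise PauseSignal(payload)


def node_with_broad_except():
    try:
        fake_interrupt("Approve?")
    except Exception:
        # Swallows the pause signal, so the runtime never learns about it.
        return "pause signal swallowed"


def node_with_specific_except():
    try:
        fake_interrupt("Approve?")
        int("not a number")  # error-prone code that could raise ValueError
    except ValueError:
        # Only ValueError is handled; the pause signal passes through.
        return "handled ValueError"


def reaches_runtime(node) -> bool:
    """Return True if the pause signal propagates out of the node."""
    try:
        node()
    except PauseSignal:
        return True
    return False


broad = reaches_runtime(node_with_broad_except)
specific = reaches_runtime(node_with_specific_except)
print(broad, specific)
```

Keeping the interrupt call outside the try block altogether avoids the question entirely and is usually the simplest option.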

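Do not reorder interrupt calls within a node

It is common to use multiple interrupts in a single node, but this can lead to unexpected behavior if not handled carefully. When a node contains multiple interrupt calls, LangGraph keeps a list of resume values specific to the task executing the node. Whenever execution resumes, it starts at the beginning of the node. For each interrupt encountered, LangGraph checks whether a matching value exists in the task's resume list. Matching is strictly index-based, so the order of interrupt calls within the node matters.

- ✅ Keep interrupt calls consistent across node executions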
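The index-based matching can be illustrated with a toy model in plain Python. This is a simplified sketch of the behavior described above, not LangGraph's implementation: run, PauseSignal, and the two node functions are hypothetical, and the node receives its interrupt function as an argument purely to keep the model self-contained.

```python
class PauseSignal(Exception):
    """Hypothetical pause signal carrying the interrupt payload."""

    def __init__(self, payload):
        super().__init__(payload)
        self.payload = payload


def run(node, resume_values):
    """Re-run node from the top; the i-th interrupt gets resume_values[i]."""
    index = 0

    def interrupt(payload):
        nonlocal index
        if index < len(resume_values):
            value = resume_values[index]
            index += 1
            return value
        raise PauseSignal(payload)  # no stored value yet: pause here

    try:
        return node(interrupt)
    except PauseSignal as signal:
        return {"paused_on": signal.payload}


def ask_name_then_age(interrupt):
    name = interrupt("name?")
    age = interrupt("age?")
    return {"name": name, "age": age}


def ask_age_then_name(interrupt):
    # Same questions, different order: stored answers no longer line up.
    age = interrupt("age?")
    name = interrupt("name?")
    return {"name": name, "age": age}


first_pause = run(ask_name_then_age, [])
second_pause = run(ask_name_then_age, ["Ada"])
consistent = run(ask_name_then_age, ["Ada", 36])
reordered = run(ask_age_then_name, ["Ada", 36])
print(first_pause, second_pause, consistent, reordered)
```

In the reordered case the stored answers are handed to the wrong questions, which is the kind of silent mismatch the rule is meant to prevent.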

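Do not return complex values in interrupt calls

Depending on which checkpointer is used, complex values may not be serializable (for example, you cannot serialize a function). To make your graphs adaptable to any deployment, it is best practice to only use values that can be reasonably serialized.

- ✅ Pass simple, JSON-serializable types to interrupt

- ✅ Pass dictionaries/objects with simple values

- 🔴 Do not pass functions, class instances, or other complex objects to interrupt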
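A quick pre-flight check can catch unsuitable payloads before they reach interrupt. The helper below is a hypothetical convenience built on the standard json module; a given checkpointer may use a different serializer, so treat it as a conservative approximation rather than the exact rule.

```python
import json


def is_json_serializable(value) -> bool:
    """Return True if the standard json module can encode the value."""
    try:
        json.dumps(value)
        return True
    except (TypeError, ValueError):
        return False


class Ticket:
    """Example of a class instance that json cannot encode directly."""

    def __init__(self, title):
        self.title = title


simple_payload = {"question": "Approve?", "items": [1, 2, 3]}
function_payload = {"callback": lambda: None}
instance_payload = {"ticket": Ticket("Refund request")}

print(is_json_serializable(simple_payload))
print(is_json_serializable(function_payload))
print(is_json_serializable(instance_payload))
```

Converting an object to a plain dictionary of its fields before passing it to interrupt is usually enough to stay on the safe side.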

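Side effects called before interrupt must be idempotent

Because interrupts work by re-running the nodes they were called from, side effects called before interrupt should (ideally) be idempotent. For context, idempotency means that the same operation can be applied multiple times without changing the result beyond the initial execution. As an example, you might have an API call inside a node that updates a record. If interrupt is called after that call, the API call is re-run each time the node resumes, potentially overwriting the initial update or creating duplicate records.

- 🔴 Do not perform non-idempotent operations before interrupt

- 🔴 Do not create new records without checking whether they already exist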
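The difference is easy to see in a plain-Python simulation. The stores and helper names below are hypothetical; the loop stands in for the code before interrupt running once on the first pass and once more on resume.

```python
records = {}        # hypothetical keyed store (upsert target)
naive_log = []      # hypothetical append-only log, written blindly
guarded_log = []    # same kind of log, written with an existence check


def upsert_user(user_id: str, name: str) -> None:
    # Idempotent: writing the same key twice leaves one record.
    records[user_id] = {"name": name}


def append_entry(entry: str) -> None:
    # Not idempotent: every call adds another row.
    naive_log.append(entry)


def append_entry_once(entry: str) -> None:
    # Made idempotent by checking whether the entry already exists.
    if entry not in guarded_log:
        guarded_log.append(entry)


# Simulate the code before interrupt: first run, then the re-run on resume.
for _ in range(2):
    upsert_user("u1", "Ada")
    append_entry("created u1")
    append_entry_once("created u1")

print(len(records), len(naive_log), len(guarded_log))
```

Another option is to move the side effect after the interrupt call, or into a separate node, so that it is not part of the code that gets replayed.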

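Using with subgraphs called as functions

When invoking a subgraph within a node, the parent graph resumes execution from the beginning of the node where the subgraph was invoked and the interrupt was triggered. Similarly, the subgraph also resumes from the beginning of the node where interrupt was called.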

Debugging with interrupts

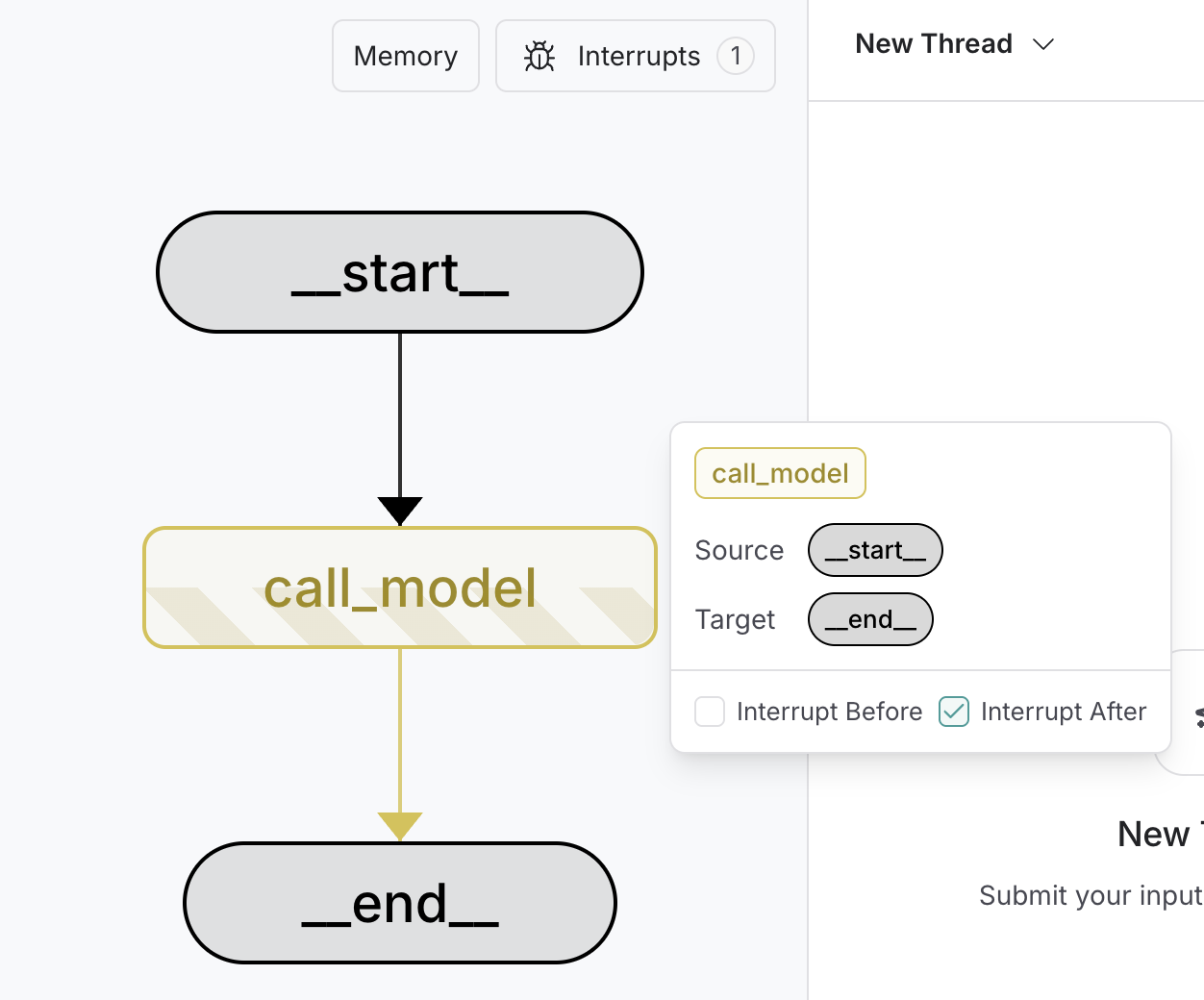

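To debug and test a graph, you can use static interrupts as breakpoints to step through the graph one node at a time. Static interrupts are triggered at defined points, either before or after a node executes. You set them by specifying interrupt_before and interrupt_after when compiling the graph.

Static interrupts are not recommended for human-in-the-loop workflows. Use the interrupt function instead.

- Breakpoints are set at compile time. interrupt_before specifies the nodes where execution should pause before the node is executed; interrupt_after specifies the nodes where execution should pause after the node is executed.

- A checkpointer is required to enable breakpoints.

- The graph runs until the first breakpoint is hit.

- The graph is resumed by passing in None as the input. This runs the graph until the next breakpoint is hit.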

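Using LangGraph Studio

You can use LangGraph Studio to set static interrupts on your graph in the UI before running it. You can also use the UI to inspect the graph state at any point in the execution.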
